1. Overview

Domain Purpose:

The Data API provides a standardized way of accessing data which is stored in various databases or data sources designed to be scalable in order to handle large volumes of data requests and flexible to accommodate various use cases and integration needs. The Data API was developed to support customer securely access and consume large volumes of data from the Youforce Data Warehouse through an asynchronous, scalable job-based model.

Typical Use Cases:

- Consume data from the Youforce Data Warehouse.

- Retrieve information to facilitate the sharing of accountability data between healthcare providers and information-requesting parties.

- Retrieve big amounts of information in an efficient way.

Base URL:

https://data.youforce.com/data/v1/

Developer Portal: Reference in the Developer portal to the Data API

Swagger page:

For more detailed information about the endpoints available in this API, please visit the Youforce Data API Swagger page

Postman collection:

For a complete example, you can check our Postman collection

2. Introduction to the basics

In this section, you will find clear definitions and short explanations of key terms and usage of this API, ensuring a common understanding to effectively function.

Key concepts

Let’s start explaining the key concepts that we will use during the rest of the documentation:

- Job: A “job” is a background process for loading data from a specific table into intermediate storage for streaming as a document upon completion in the selected format.

- Operation: An “operation” is a group of jobs, designed to simplify the management of multiple tables for customers who require consistent data sets.

How does this api work?

The system moved away from the typical entity-specific endpoints toward a catalog-driven model where customers define what they need using a configuration ID.

So to start using this API, you need to have a configuration created. In this configuration, it will be stated which specific data a consumer can retrieve from the reporting tables for a given tenant: you can select exactly which columns/fields per table to include in the response, allowing to fetch only the information really needed.

There can exist several configurations per tenant due to the fact that configurations are hardcoded and tied to a client Id, tenant Id and configuration Id to support several scenarios where a third party might consume data from multiple tenants. This allows customers to have a default configuration for regular data retrieval and create additional configurations for specific data sets or fields.

After this configuration has been created and access has been granted through Visma Connect, you can get a token to authenticate as a consumer.

Data API uses an asynchronous request pattern, where each request will be translated into an operation that is scheduled internally, processed in the background, and store the data in a temporary file storage for download in various formats (JSON, CSV). Due to the fact that customers can configure multiple tables together, when they trigger an operation, several jobs - one per table - will be scheduled in a background process. The integrator can monitor their collective status through a status endpoint, eliminating the need to manage individual jobs separately.

We also introduced the “metadata” endpoint, which allows customers to check to which tables and fields they have access, noting that only configured fields would be retrieved and displayed.

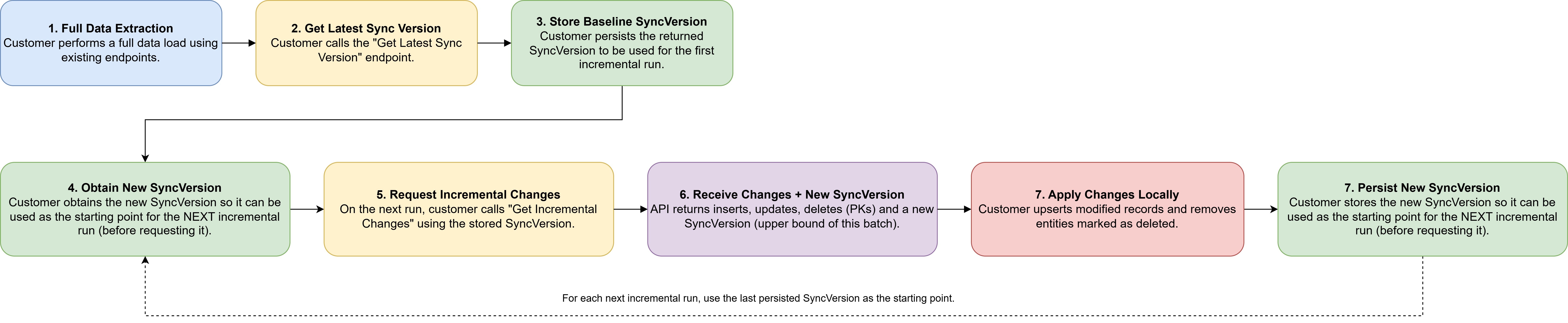

3. Customer Synchronization Workflow

Initial Setup:

- 1.- Customer performs a full extraction using existing endpoints.

- 2.- Customer calls Get Latest Sync Version.

- 3.- Customer stores this version as baseline.

Recurring Incremental Updates

- 1.- Customer obtains the new sync version.

- 2.- Customer calls Get Incremental Changes passing the previously stored sync version.

- 3.- API returns:

- Updated records

- Inserted records

- Deleted PKs

- A new SyncVersion snapshot

- 4.-Customer processes changes:

- Upsert modified entities

- Delete entities marked as removed

- 5.- Customer stores the new sync version.

4. Security and Scopes

Our API implements several security mechanisms designed to ensure the privacy of consumers and the integrity of their data. This section highlights key features that safeguard user information:

- Add access restrictions to the data collected so it is only accessible by some groups through the internal network.

- Encryption of stored files. The files downloaded correctly in the api won’t be encrypted.

- Support DPoP (Demonstrating Proof of Possession), an advanced security mechanism that helps to prevent token theft and replay attacks by cryptographically binding access tokens to a specific client instance.

If you want more information regarding security, please, check our Security section

A layered authorization structure was introduced in the form of scopes, to ensure both security and scalability:

| Scope | Description | Endpoints |

|---|---|---|

| youforce-data-api:metadata:read | Metadata Read Access | GET /data/v1/Metadata |

| youforce-data-api:data:read | Data Read/Download Access | GET /data/v1/jobs/{jobId} |

| youforce-data-api:jobs:manage | FullData/Incrementals Operation Management Access | POST /data/v1/fulldata PUT /data/v1/fulldata/{operationId}/retry POST /data/v1/fulldata/{operationId}/cancel DELETE /data/v1/fulldata/{operationId} GET /data/v1/fulldata/{operationId}/status |

5. Good practices and possible errors

- There can only be one default configuration per tenant, clientId and configuration id.

- In the Data API, if the 'ConfigurationId' parameter is not provided, the API will automatically use the default configuration associated with the cliendId/tenant.

- Remember: configure the correct scopes to operate with this API.

6. Limitations

In order to ensure optimal performance, security and resource management, some limitations have been implemented. These limitations are necessary to prevent misuse, ensure fair usage and protect the system from potential overload, which could impact service availability.

- Each client can trigger one Operation at the same time. If a client that has an Operation running, tries to trigger another request, the system will return a 409 error.

- Each client can trigger up to 3 Operations per day.